What is Edge Compute?

Edge compute nodes are localized, virtualized compute resources deployed near enterprise or user locations.

Edge computing was originally conceived to host latency-sensitive mobile network functions close to radio access networks (RAN) as these networks virtualized. Edge compute is now considered critical infrastructure for cloud-native 5G networks.

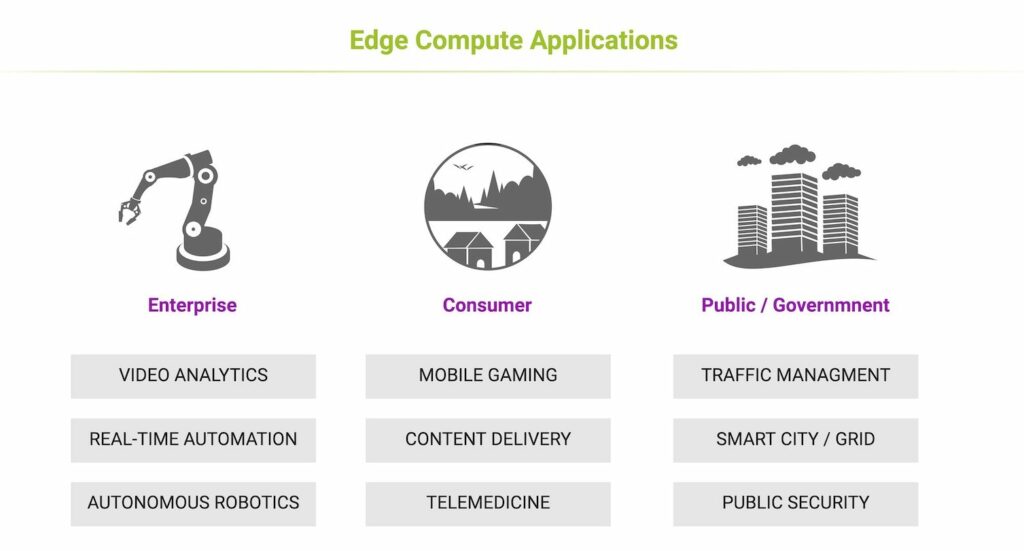

Because of this origin, edge compute was originally called Mobile Edge Computing (MEC) by the ETSI telecom standards body that defined it. However, as applications emerged for edge compute resources beyond network functions themselves — low-latency gaming, for example — it became clear that edge computing would become a multipurpose resource. ETSI has since redefined MEC as Multi-Access Edge Computing, recognizing a lucrative new business case for mobile operators: their ability to host localized workloads for enterprises and application developers.

While edge compute is still essential for 5G mobile networks, it is no longer exclusively associated with telecom applications. Edge computing has become synonymous with the Edge Cloud, reflecting its strong growth in enterprise and mission-critical applications.

Why is Edge Compute Needed?

Edge computing is primarily aimed at hosting low-latency applications and workloads, including AI processing for machine vision, video processing and industrial process control, critical public safety communications, connected and autonomous vehicles, and mission-critical internet of things (IoT) applications. While low latency is the primary performance requirement, edge computing can also localize data storage and processing for security and data sovereignty in privacy-centric applications such as healthcare and financial services. It is also well suited to remote locations that need local cloud resources, such as mining, agriculture and military operations.

Key applications for edge computing also include video caching and delivery (CDN), real-time video communication and gaming, vehicle and air traffic management, remote patient monitoring and smart grids.

Where is Edge Compute Located?

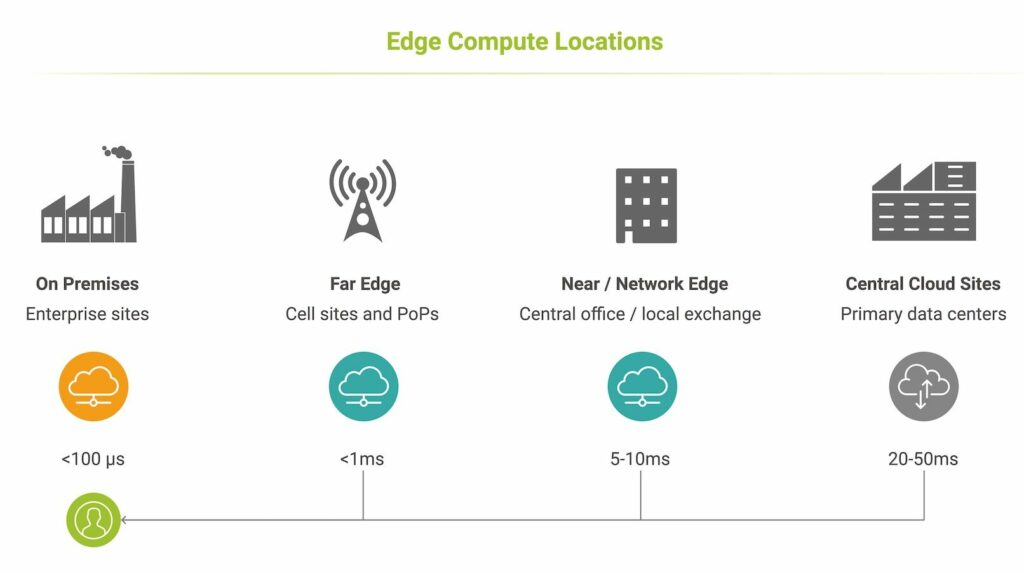

There are generally three kinds of edge computing, categorized by their distance, as measured in latency, from their clients (enterprises or end users):

- near edge / network edge

- far edge

- on-premises edge

Strictly speaking, the tiers are defined by latency rather than physical distance. On-premises edge nodes are deployed directly on enterprise sites; they offer the fastest response times and can supplement or replace private clouds and data centers. Far edge compute is often hosted at cell sites or street cabinets. Near edge compute is typically deployed at telecom central offices or at regional data centers that serve a city or a region spanning multiple provinces or states. These locations may coincide with hyperscale cloud data centers (for example, in Data Center Valley).
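To make the link between distance and latency concrete, here is a minimal sketch that estimates the lower bound on round-trip propagation delay over a fiber path. It assumes light travels through optical fiber at roughly 200,000 km/s (about two thirds of its speed in a vacuum). The function name and the example distances are hypothetical, chosen only for illustration; they are not tier definitions.

```python
# Approximate signal speed in optical fiber: roughly 200,000 km/s,
# which is 200 km per millisecond. This is an approximation.
FIBER_KM_PER_MS = 200.0


def round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip propagation delay over a fiber path."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return 2 * distance_km / FIBER_KM_PER_MS


# Hypothetical path lengths, for illustration only.
EXAMPLE_PATHS_KM = {
    "on-premises": 0.1,
    "far edge": 10,
    "near edge": 100,
    "distant cloud region": 1000,
}

if __name__ == "__main__":
    for site, km in EXAMPLE_PATHS_KM.items():
        print(f"{site:>22}: {round_trip_ms(km):.3f} ms round trip")
```

Under these assumptions, a 100 km path contributes about 1 ms of round-trip propagation delay. Real paths add switching, queuing and processing time on top of this, so measured latency will be higher.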

Edge compute nodes will become increasingly ubiquitous, located close to key industrial and financial centers and customers. Large cloud providers (Google, AWS, Microsoft, Alibaba) have many dozens of edge computing locations and are targeting several hundred more across Europe and North America within the next few years.

Who Owns Edge Compute Clouds?

Edge computing resources are offered by managed service providers, telecom service providers and public cloud providers from a variety of locations chosen for fast, low-latency connectivity.

Increasingly, telecom service providers are partnering with major cloud providers to resell edge cloud services to their customers, or to consume them directly as part of their 5G network infrastructure. Conversely, cloud and managed service providers often depend on telecom providers for connectivity between edge compute and customers, and between their edge and hyperscale data centers. As a result, most national telecom providers lease hosting facilities to all major cloud providers.

As edge computing demand continues to accelerate at over 100% annually, “co-opetition” between managed, cloud and telecom service providers will continue to evolve, making this a complex space with new dependencies between management, hosting and network infrastructure.

How Do Enterprises Connect to Edge Computing?

Enterprises consuming edge compute need reliable, low-latency connectivity to the distributed edge clouds that host their business-critical applications and workloads. Several connectivity options are available, depending on location, edge compute provider and performance requirements. You can learn more about how latency drives digital performance in this article.

Public cloud providers partner with local service providers to offer direct cloud connectivity, and can leverage these partnerships to provide local access services to their edge clouds. Telecom service providers and regional ISPs also provide low-latency routes to edge compute nodes within their footprints.

Converged wireless-wireline providers are in a unique position, as their mobile core network is typically connected to near and far edge compute locations serving their 5G networks. Some connect enterprises to edge compute resources through their mobile backbone, applying the same prioritization, routing, encryption and security policies to edge traffic as they apply to mobile traffic.

Other options include general internet access, dedicated private lines and private or public 5G mobile connectivity. Microwave connections are ideal for ultra-low-latency applications, including financial trading.

Enterprises can also connect to edge locations with SD-WAN, using any of these options as part of their network underlay. SD-WAN policies need to be carefully configured and tested to ensure latency and throughput are sufficient for demanding edge applications.
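As an illustration of the kind of test this implies, the sketch below checks measured latency and throughput samples for one path against an application's requirements. It is not tied to any SD-WAN product: the `PathRequirement` class, the function names, the 95th-percentile criterion and the sample values are all assumptions made for this example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PathRequirement:
    """Hypothetical per-application targets for a network path."""
    max_p95_latency_ms: float
    min_throughput_mbps: float


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def path_meets_requirement(latency_ms, throughput_mbps, req):
    """True if p95 latency and worst-case throughput both satisfy req."""
    latency_ok = percentile(latency_ms, 95) <= req.max_p95_latency_ms
    throughput_ok = min(throughput_mbps) >= req.min_throughput_mbps
    return latency_ok and throughput_ok


if __name__ == "__main__":
    # Illustrative targets and measurements, not real data.
    req = PathRequirement(max_p95_latency_ms=20, min_throughput_mbps=100)
    latency_samples = [8, 9, 10, 12, 30]
    throughput_samples = [120, 150, 140]
    print(path_meets_requirement(latency_samples, throughput_samples, req))
```

Judging a path by a high percentile rather than the average is one possible design choice: a single slow sample, like the 30 ms outlier here, can cause a path to fail even when typical latency looks acceptable.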

Learn about how you can monitor hybrid connectivity performance here.